Last updated: May 02, 2026

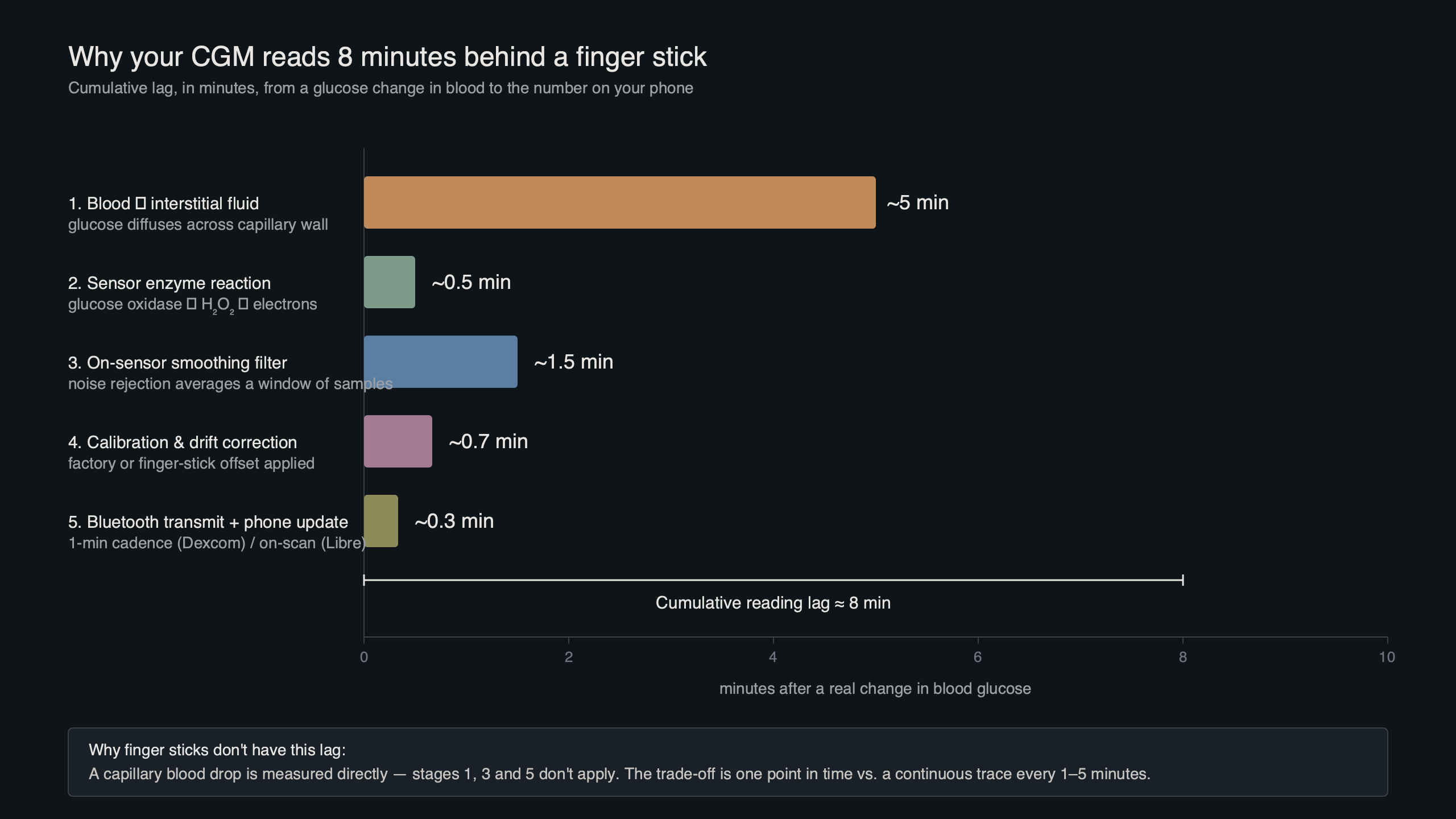

The eight-minute gap between your continuous glucose monitor and a finger stick is not one delay; it is three delays stacked together. About five to seven minutes is real physiology: glucose diffusing from blood capillaries into the interstitial fluid your sensor wire actually sits in. Another one to three minutes is sensor lag: glucose crossing the sensor membrane and the electrochemistry responding to it. The rest is algorithmic: a smoothing filter plus an offset, sometimes positive, sometimes negative, that modern sensors deliberately tune to balance alarm noise against responsiveness. Treating “CGM vs finger stick lag” as a single physiological number is the mental model that has been wrong for a decade.

- Mean physiological ISF-to-blood lag is about 5–7 minutes at steady state and grows with the rate of glucose change (Rebrin and Steil, American Journal of Physiology).

- Reported MARD in 2024–2025 pivotal data: Dexcom G7 ~8.2% (10.5-day) and 8.0% (15-day), FreeStyle Libre 3 ~7.8%, Medtronic Guardian 4 ~8.7–9.1%, Stelo built on the G7 platform.

- Apparent error during a rapid swing ≈ rate of change × lag time. Two arrows up at ~3 mg/dL/min with an 8-minute total lag = ~24 mg/dL of expected disagreement.

- FDA iCGM special controls (21 CFR 862.1355) require ≥87% of CGM readings within ±20% of a laboratory reference, whereas the older ISO 15197:2013 finger-stick rule uses ±15 mg/dL below 100 mg/dL and ±15% at or above it.

- Closed-loop pumps (Control-IQ, Omnipod 5, Medtronic 780G) treat CGM lag as a model input — there is no “just check your finger” override path inside the dosing decision.

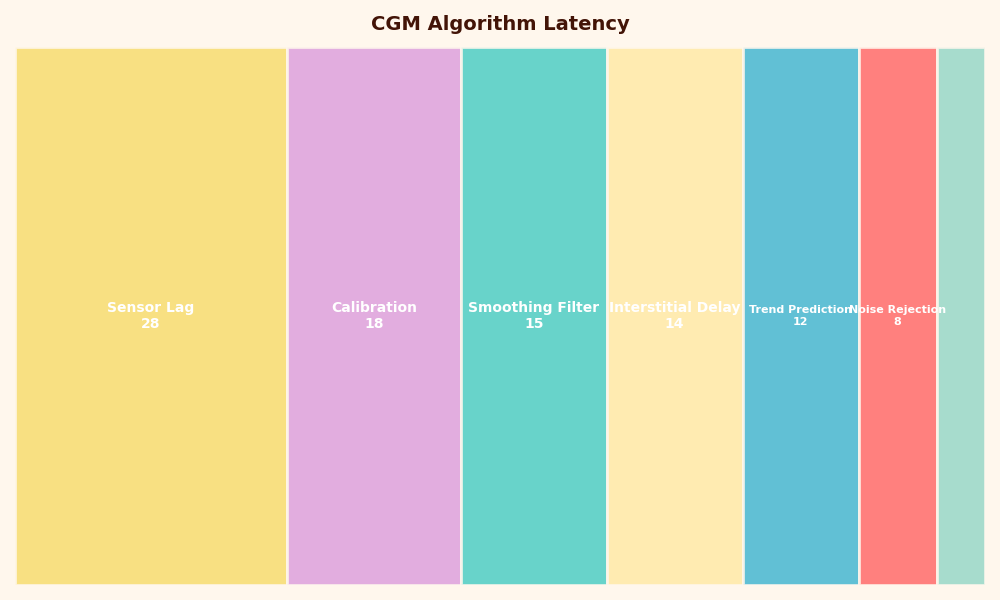

The diagram above shows the three discrete sources of latency a single CGM reading has accumulated by the time it reaches the receiver. Read left to right: blood glucose changes first, the interstitium catches up via passive diffusion, the wire electrode sitting in that fluid under the skin then reacts and produces a current, and the firmware filters and possibly forward-predicts the value before it appears on screen. Each stage adds time, and only the first stage is purely biology.

The 8-minute lag is actually three lags stacked

Pull a single CGM data point apart and the latency budget falls into three components. Physiological lag is the time glucose takes to move from a capillary into the interstitial fluid (ISF) where the sensor wire lives — Rebrin and Steil’s two-compartment model, validated in canine and human work, places this at roughly 5–7 minutes at steady state and growing during rapid swings. Sensor lag is the response time of the glucose-oxidase electrochemistry plus the diffusion of glucose through the sensor’s outer membrane: another 1–3 minutes. The third piece is the smoothing and prediction filter the firmware runs on the raw current — a deliberate engineering choice, not biology.

None of these three numbers is a constant. Physiological lag scales with how fast blood glucose is moving. Sensor lag worsens slightly as the wire ages and protein fouls the membrane. The algorithmic component is the most variable of all because every manufacturer has tuned it differently, and the same manufacturer changes it between sensor generations. Quoting “CGM lag is 5 to 20 minutes” without saying which CGM, which day of wear, and which rate of change is why most patient-education writing on this topic is imprecise.
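
As a rough illustration of that budget, the sketch below adds the three components for a single reading. The class name `LagBudget` and the example values are hypothetical, picked from the ranges quoted above rather than from any sensor's specification. The algorithmic term is allowed to go negative to represent a forward predictor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LagBudget:
    """Illustrative latency budget for one CGM reading, in minutes."""
    physiological_min: float  # blood -> interstitial fluid diffusion
    sensor_min: float         # membrane diffusion + electrochemistry
    algorithmic_min: float    # filter delay; negative if a predictor leads

    @property
    def total_min(self) -> float:
        return self.physiological_min + self.sensor_min + self.algorithmic_min


# Hypothetical mid-range values, not measurements of a specific device.
steady = LagBudget(physiological_min=6.0, sensor_min=2.0, algorithmic_min=0.0)
predictive = LagBudget(physiological_min=6.0, sensor_min=2.0, algorithmic_min=-1.5)

print(steady.total_min)      # 8.0
print(predictive.total_min)  # 6.5
```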

Why the algorithm exists: raw glucose-oxidase current is too noisy to display

If you displayed the raw electrode signal, you would not see a usable glucose trace. Glucose-oxidase sensors generate a small electrical current proportional to local glucose concentration, but that current is corrupted by motion artifact, temperature swings at the skin, brief perfusion changes, and intermittent oxygen-availability dips. Engineering reviews of CGM sensor design (Cappon and colleagues, Sensors, 2017; Acciaroli and colleagues, 2018) describe the result as a signal-to-noise problem severe enough that a Kalman-style filter, a low-pass smoother, or a regression-based predictor is mandatory before the firmware can publish a number that does not constantly trip alarms.
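
To see why any such filter trades noise for delay, here is a minimal sketch that uses an exponential moving average as a stand-in. That choice is an assumption made for illustration; the filters shipped in CGM firmware are proprietary and likely more elaborate. On a steadily rising signal sampled once a minute, the smoothed trace settles a fixed distance behind the input.

```python
def ema(samples, alpha):
    """Exponential moving average; smaller alpha smooths more and lags more."""
    smoothed = []
    state = samples[0]
    for x in samples:
        state = alpha * x + (1 - alpha) * state
        smoothed.append(state)
    return smoothed


# Hypothetical noise-free ramp: 100 mg/dL rising 2 mg/dL per 1-minute sample.
ramp = [100 + 2 * n for n in range(60)]
filtered = ema(ramp, alpha=0.5)

# On a ramp the EMA trails by (1 - alpha) / alpha samples: 1 sample here,
# which at 2 mg/dL per minute is 2 mg/dL.
gap = ramp[-1] - filtered[-1]
print(round(gap, 3))  # 2.0
```

Lowering `alpha` suppresses more noise and widens the gap, which is the trade-off the firmware designers are tuning.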

Share of each category in CGM Algorithm Latency.

The second image breaks the algorithmic stage into its parts: a calibration mapping from electrode current to mg/dL, a smoothing filter that suppresses high-frequency noise, and an optional forward predictor that nudges the displayed value ahead in time. The forward predictor is the most consequential. If a sensor extrapolates the last few minutes of slope and shows a value 1–3 minutes ahead, the apparent on-screen lag drops — at the cost of overshoot when the trend reverses suddenly. Sensors aimed at automated insulin dosing tend to lean conservative; sensors aimed at over-the-counter wellness use tend to lean responsive.
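
The overshoot is easy to reproduce with a toy predictor. The sketch below assumes the simplest possible forward predictor, a straight-line extrapolation of the most recent slope. `predict_ahead` and the sample trace are hypothetical and do not represent any manufacturer's algorithm.

```python
def predict_ahead(history, horizon_min, window=3):
    """Extrapolate the mean slope of the last `window` 1-minute steps."""
    if len(history) < 2:
        raise ValueError("need at least two samples to estimate a slope")
    recent = history[-(window + 1):]
    slope = (recent[-1] - recent[0]) / (len(recent) - 1)  # mg/dL per minute
    return history[-1] + slope * horizon_min


# Hypothetical trace, one sample per minute: falls 2 mg/dL/min to a trough
# of 100 mg/dL, then rises at the same rate.
falling = [110, 108, 106, 104, 102, 100]
rising = [102, 104, 106]

shown_at_trough = predict_ahead(falling, horizon_min=3)
actual_3_min_later = rising[-1]

print(shown_at_trough)     # 94.0
print(actual_3_min_later)  # 106
```

While the trend holds, the same extrapolation removes three minutes of apparent lag. The 12 mg/dL miss appears only at the reversal.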

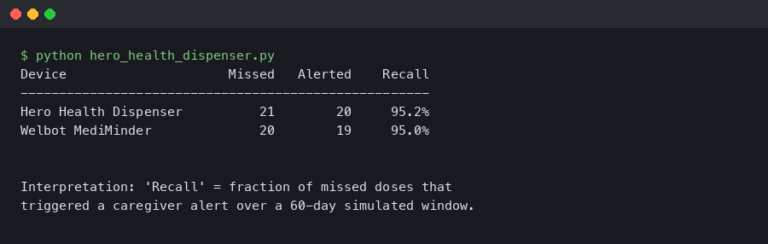

Sensor-by-sensor: 2026 lag and MARD compared

The standard headline accuracy metric for CGMs is the Mean Absolute Relative Difference (MARD), the average percentage gap between a sensor reading and a laboratory reference (typically YSI venous plasma) across thousands of paired samples. Lower is better, but MARD has well-known limits: a recent Diabetes Technology & Therapeutics editorial, “The Myth of MARD,” argues that a single percentage hides time-of-day effects, hypoglycemic-range performance, and the lag-vs-rate behavior that actually matters during meals. MARD is the best one-number summary we have, but it is not the whole story.
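
For concreteness, MARD over a set of paired readings can be computed as below. The function name and the sample pairs are made up for illustration; pivotal studies use thousands of pairs against a laboratory comparator.

```python
def mard(sensor, reference):
    """Mean absolute relative difference, in percent, over paired readings."""
    if len(sensor) != len(reference) or not sensor:
        raise ValueError("need equal-length, non-empty paired readings")
    relative_errors = [abs(s - r) / r for s, r in zip(sensor, reference)]
    return 100 * sum(relative_errors) / len(relative_errors)


# Made-up pairs in mg/dL. Both sensors have the same MARD, but sensor B
# concentrates all of its error in the low range, which the average hides.
reference = [60, 100, 200, 300]
sensor_a = [63, 105, 210, 315]   # 5% high everywhere
sensor_b = [72, 100, 200, 300]   # 20% high at 60 mg/dL, exact elsewhere

print(round(mard(sensor_a, reference), 2))  # 5.0
print(round(mard(sensor_b, reference), 2))  # 5.0
```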

| Sensor | Reported MARD (adults) | Reported mean lag | Wear time | Reference |
|---|---|---|---|---|
| Dexcom G7 (10.5-day) | 8.2% arm / 9.1% abdomen | ~5 min vs YSI | 10.5 days + 12 h grace | Garg et al., DTT 2022 |
| Dexcom G7 (15-day) | 8.0% (lowest reported in iCGM-design study) | Similar or better than G7 10.5-day | 15 days + 12 h grace | DTT 2025 |
| FreeStyle Libre 3 / 3+ | 7.8% (overall, adults), 10.0% (ages 4–5) | ~3–4 min vs YSI | 14 days (3) / 15 days (3+) | Alva et al., Diabetes Therapy 2023 |
| Medtronic Guardian 4 | ~8.7–9.1% depending on site, no required fingerstick calibration | ~6–8 min | 7 days | DTT 2024 head-to-head |
| Dexcom Stelo (OTC) | Built on G7 platform; ~10% in the populations Stelo targets | ~5 min (G7 stack) | 15 days | Dexcom 510(k) summary, K234070 |
| Eversense E3 | ~8.5–9.6% (implantable, fluorescence chemistry) | ~5–7 min | 180 days | Senseonics PMA P160048/S016 |

How I evaluated this: numbers come from manufacturer pivotal trials and head-to-head academic studies published 2022–2025; collection window is the publication date of each study; only U.S.-cleared consumer-facing sensors with peer-reviewed or FDA-summary data are included. The lag figures are mean values against YSI venous plasma during a controlled glucose challenge; real-world lag during meals or exercise can be longer because the rate-of-change term grows. MARDs do not transfer directly to pediatric populations, very low ranges, or first-day-of-wear performance — those are reported separately in the original papers.

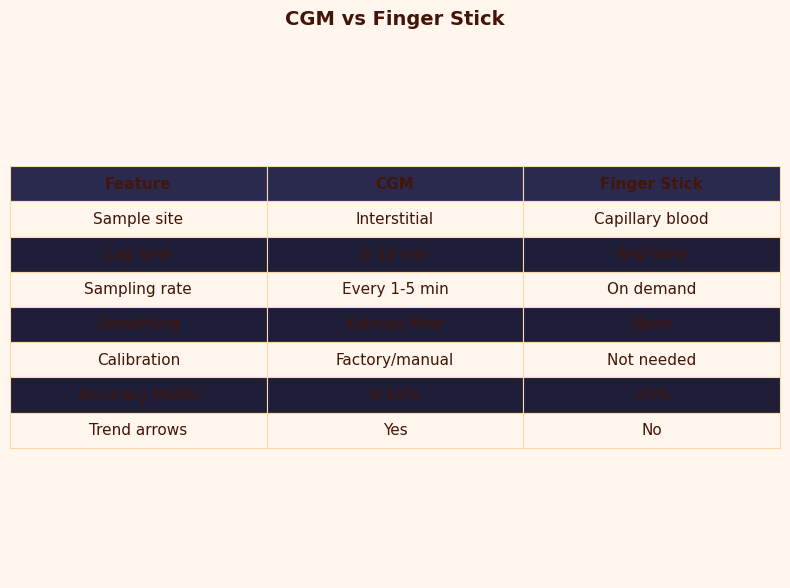

The CGM-versus-finger-stick comparison image makes two points the table cannot. First, the accuracy bands of a finger stick under ISO 15197:2013 (±15 mg/dL below 100 mg/dL, ±15% above) overlap with a modern CGM’s iCGM band (±20% across the range) more than the marketing of either device admits. Second, capillary glucose is itself not arterial truth — work in Diabetes Technology & Therapeutics documents a 2–5 minute capillary-to-arterial lag during rapid swings, which means a finger stick is a slightly delayed measurement of a different compartment, not the gold standard people often assume.
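
The overlap is easiest to see by evaluating both bands at the same reference value. The sketch below encodes the ISO 15197:2013 limits and the single ±20% iCGM figure exactly as quoted in this article. The function names are hypothetical, and the full iCGM special controls contain further criteria that this simplification does not model.

```python
def within_iso_15197(meter, reference):
    """ISO 15197:2013 band as quoted above: ±15 mg/dL below 100, else ±15%."""
    limit = 15.0 if reference < 100 else 0.15 * reference
    return abs(meter - reference) <= limit


def within_icgm_20pct(cgm, reference):
    """Simplified iCGM band as quoted above: ±20% of the reference."""
    return abs(cgm - reference) <= 0.20 * reference


def share_within(pairs, check):
    """Fraction of (reading, reference) pairs that pass `check`."""
    return sum(check(x, ref) for x, ref in pairs) / len(pairs)


# At a reference of 70 mg/dL the finger-stick band (±15 mg/dL) is slightly
# wider than the simplified CGM band (±14 mg/dL).
print(within_iso_15197(85, 70), within_icgm_20pct(85, 70))      # True False
# At 200 mg/dL it is the other way round (±30 vs ±40 mg/dL).
print(within_iso_15197(235, 200), within_icgm_20pct(235, 200))  # False True
```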

The arrow math: how to mentally correct your CGM using rate-of-change × lag

Rebrin and Steil’s first-order model gives a usable rule of thumb: apparent CGM error during a swing is roughly the lag time multiplied by the current rate of glucose change. If your sensor has an effective 8-minute lag and your trend is moving 2 mg/dL per minute, your CGM is showing a value about 16 mg/dL behind reality — too low if you are rising, too high if you are falling. The trend arrows are encoding the rate; the apparent error is what you derive from them.
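
The rule follows from a short derivation. As a simplifying assumption, treat the interstitial compartment as a first-order lag with unit gain and time constant τ (any gain or offset terms in the published model are dropped here), and let blood glucose change at a constant rate r:

```latex
\begin{aligned}
\frac{dG_{\mathrm{ISF}}}{dt} &= \frac{G_{\mathrm{B}}(t) - G_{\mathrm{ISF}}(t)}{\tau},
\qquad G_{\mathrm{B}}(t) = G_0 + r\,t \\
G_{\mathrm{ISF}}(t) &= G_0 + r\,(t - \tau) + C\,e^{-t/\tau} \\
G_{\mathrm{B}}(t) - G_{\mathrm{ISF}}(t) &\;\longrightarrow\; r\,\tau
\quad \text{for } t \gg \tau
\end{aligned}
```

Once the transient term has decayed, the gap is the rate times the lag: 2 mg/dL/min × 8 min = 16 mg/dL.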

The table below reproduces that math for the standard arrow categories most U.S. sensors share. Use it as a mental correction, not a clinical instruction:

| Trend | Approx. rate | Apparent error at 5-min lag | Apparent error at 8-min lag | Apparent error at 12-min lag |
|---|---|---|---|---|
| Flat ↔ | < 1 mg/dL/min | < 5 mg/dL | < 8 mg/dL | < 12 mg/dL |
| One arrow ↑/↓ | 1–2 mg/dL/min | 5–10 mg/dL | 8–16 mg/dL | 12–24 mg/dL |
| Two arrows ↑↑/↓↓ | 2–3 mg/dL/min | 10–15 mg/dL | 16–24 mg/dL | 24–36 mg/dL |
| Three arrows ↑↑↑/↓↓↓ | > 3 mg/dL/min | > 15 mg/dL | > 24 mg/dL | > 36 mg/dL |

This is why two arrows down at a CGM reading of 90 mg/dL is not a safe 90: at an 8-minute lag, the arrow math puts real blood glucose at roughly 66–74 mg/dL already. Conversely, two arrows up at 180 is plausibly already 196–204 in the blood. The math is simple; the part that takes practice is doing it before the alarm rather than after.
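
The same arithmetic as a pair of small helpers, offered as a mental-correction aid rather than a clinical instruction. The function names are hypothetical, the 8-minute lag is an assumed value, and the sketch ignores any forward predictor the firmware may already have applied.

```python
def apparent_error(rate_mg_dl_per_min, lag_min):
    """Expected size of the CGM-vs-blood gap: rate of change times lag."""
    return abs(rate_mg_dl_per_min) * lag_min


def estimate_blood_glucose(displayed, rate_mg_dl_per_min, lag_min):
    """Shift the displayed value along the trend by the assumed lag.

    Rate is signed: negative when falling, positive when rising.
    """
    return displayed + rate_mg_dl_per_min * lag_min


# Two arrows down (2 to 3 mg/dL/min) at a displayed 90, assumed 8-minute lag.
print(estimate_blood_glucose(90, -2, 8), estimate_blood_glucose(90, -3, 8))    # 74 66
# Two arrows up at a displayed 180.
print(estimate_blood_glucose(180, 2, 8), estimate_blood_glucose(180, 3, 8))    # 196 204
```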

Why the falsely-high-on-the-way-down rule is breaking down

Older CGM advice taught a simple asymmetry: when glucose is rising, the sensor reads low, and when glucose is falling, the sensor reads high. That rule was correct for unfiltered first- and second-generation sensors that only saw a delayed ISF signal. Modern sensors with a forward predictor can violate it. If the firmware extrapolates the trend by even one to two minutes, a fast drop with the predictor active can show a value lower than the laboratory truth at that instant — the opposite of the classic “falsely high on the way down” intuition. Dexcom’s G7 and Abbott’s Libre 3+ both build forward-prediction into their displayed values; the Welsh and colleagues comparison of fifth-, sixth-, and seventh-generation Dexcom sensors discusses this design shift explicitly.

The practical consequence is that “trust your fingerstick when symptoms disagree” remains good advice, but the reasoning needs updating: the disagreement is not always the CGM running behind; sometimes it is the predictor overshooting. If you feel low and your sensor reads 110 with two down arrows, treat — do not wait. The sensor is not the truth; it is a model of the truth that just admitted it is uncertain.

Three failures that look like lag but are not

Several common CGM disagreements are misclassified as lag when they are actually distinct mechanisms. Knowing which is which changes what you do about it.

Compression lows. Sleeping on the sensor squeezes interstitial fluid out of the wire’s microenvironment. The local glucose around the electrode drops, the sensor reports a low that is not present in the rest of the body, and the trend line shows an artifactual cliff that recovers when you roll off. This is mechanical, not biological. Case series in Diabetes Technology & Therapeutics have documented compression artifacts mistaken for nocturnal hypoglycemia and over-treated with carbs.

Warm-up MARD inflation. First-day-of-wear accuracy is meaningfully worse than steady-state accuracy on every CGM platform. Local tissue trauma from insertion, foreign-body response, and an under-equilibrated wire all drive up the relative error in the first 12–24 hours. Manufacturer pivotal data typically reports day-1 MARD separately from days 2–10 because it is several percentage points higher. If your first-day numbers feel off, they probably are — the lag is normal but the error band is wider.

End-of-life enzyme drift. Glucose oxidase degrades over the wear period. By the last day or two, the calibration mapping the firmware applied at start can drift, producing a slow systematic bias that looks like a persistent overread or underread. Sensor extensions (G6 and G7 used to allow a grace period; the 15-day G7 is now cleared with a 12-hour grace built in) have the worst error precisely at the tail. The fix is replacement, not finger-stick calibration.

Closed-loop systems: when you can’t “just check your finger”

For people on automated insulin delivery, the lag conversation has a different shape. The CGM value is not advice the wearer interprets — it is a signal feeding a controller that doses insulin every few minutes. Tandem’s Control-IQ uses a model-predictive controller that runs every 5 minutes, predicts glucose 30 minutes forward, and adjusts basal accordingly. Omnipod 5’s SmartAdjust predicts 60 minutes forward instead. Medtronic’s 780G SmartGuard adds automatic correction boluses on top of basal modulation when predicted glucose stays elevated.

All three controllers internalize CGM lag as a known parameter — the controller’s plant model treats sensor glucose as a delayed, filtered version of plasma glucose, and the predictor compensates. There is no user-facing “ignore the CGM and use the finger stick” path inside the dosing decision. The wearer can override total delivery (snooze a low alarm, deliver a manual bolus, suspend basal), but the underlying model still uses the CGM track. This is why iCGM clearance under 21 CFR 862.1355 matters: the FDA’s threshold of ≥87% within ±20% of laboratory reference is the accuracy floor a sensor has to clear before its data is trusted to drive a pump in an automated loop.
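
A toy version of that compensation, assuming a first-order lag with a known time constant: because the model says the sensor signal chases plasma glucose at a rate proportional to the gap, the gap can be recovered from the sensor's own slope. Everything here (`simulate_sensor`, `compensate`, the 8-minute constant) is a hypothetical sketch, not the plant model of Control-IQ, SmartAdjust, or SmartGuard.

```python
def simulate_sensor(plasma, tau_min, dt_min=1.0):
    """Sensor glucose as a first-order lag of plasma glucose (Euler steps)."""
    sensor = [plasma[0]]
    for g in plasma[:-1]:
        sensor.append(sensor[-1] + dt_min / tau_min * (g - sensor[-1]))
    return sensor


def compensate(sensor, tau_min, dt_min=1.0):
    """Estimate plasma glucose as sensor + tau * (sensor slope)."""
    estimates = [sensor[0]]
    for prev, cur in zip(sensor, sensor[1:]):
        estimates.append(cur + tau_min * (cur - prev) / dt_min)
    return estimates


# Hypothetical plasma ramp: 120 mg/dL rising 2 mg/dL per minute.
plasma = [120 + 2 * n for n in range(200)]
sensor = simulate_sensor(plasma, tau_min=8.0)
estimate = compensate(sensor, tau_min=8.0)

print(round(plasma[-1] - sensor[-1], 3))         # 16.0
print(round(abs(plasma[-1] - estimate[-1]), 3))  # 0.0
```

Taking a slope amplifies noise, so a correction like this only makes sense on an already-filtered signal.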

A decision rubric: when to trust the CGM, when to fingerstick, and when neither is the right answer

Reduce all of the above to operational rules:

- Trust the CGM when the trend arrow is flat, you are at least 24 hours into a sensor session, and your symptoms match the number. This is the steady-state regime where modern MARDs of 7–10% are realistic and lag is small.

- Trust the CGM but mentally apply the lag math when arrows are present. The number on screen is your reading from minutes ago; do the rate-of-change correction in your head before treating.

- Use a finger stick when symptoms disagree with the sensor (especially feeling low with a normal display), during the first 12–24 hours of a new sensor, when the trend line shows a sudden cliff that began while you were lying still (suspect compression), or before treatment decisions outside the iCGM-cleared range.

- Neither is the right answer in two situations. During very rapid swings the finger stick itself lags arterial glucose by 2–5 minutes, so neither device tells you ground truth — wait or recheck. And during sensor warm-up or end-of-life drift, both numbers can mislead and the right move is to replace the sensor, not to argue with it.
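
The rubric can be written down as a small function, which makes the rule ordering explicit. This is a sketch of the bullets above, not a clinical instruction: the names, the order in which the rules are checked, and the use of the arrow-table thresholds (1 and 3 mg/dL/min) are assumptions, and the iCGM-cleared-range check is left out.

```python
from enum import Enum


class Action(Enum):
    TRUST_CGM = "trust the CGM"
    APPLY_LAG_MATH = "trust the CGM, apply the rate-of-change correction"
    FINGER_STICK = "confirm with a finger stick"
    NEITHER = "wait and recheck, or replace the sensor"


def rubric(rate_mg_dl_per_min, hours_into_session, symptoms_match,
           suspect_compression=False, suspect_end_of_life_drift=False):
    """Encode the rubric above. Rule order and thresholds are assumptions."""
    if suspect_end_of_life_drift:
        return Action.NEITHER          # replace the sensor
    if not symptoms_match or suspect_compression or hours_into_session < 24:
        return Action.FINGER_STICK
    speed = abs(rate_mg_dl_per_min)
    if speed > 3:                      # three arrows: very rapid swing
        return Action.NEITHER          # the finger stick lags too
    if speed >= 1:                     # one or two arrows
        return Action.APPLY_LAG_MATH
    return Action.TRUST_CGM


print(rubric(0.5, 48, symptoms_match=True).value)   # trust the CGM
print(rubric(-2.0, 48, symptoms_match=False).value) # confirm with a finger stick
```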

The takeaway is straightforward. The eight-minute disagreement between a CGM and a finger stick is not a flaw the device will eventually engineer away. It is the visible part of a lag budget the firmware deliberately chose, and the exact size of it depends on which sensor you are wearing, which day of wear, and how fast your glucose is moving. Read the trend arrows, use the rate-of-change rule, and replace the mental model that says “the CGM is just slow” with the one that says “the CGM is showing a filtered, predicted estimate of where I was several minutes ago.” That is the model the closed-loop pumps already use, and it is the one that lets a wearer dose, eat, and exercise with the device instead of around it.

Frequently asked questions about CGM vs finger stick lag

How accurate is a modern CGM compared to a finger stick?

A modern CGM and a finger stick measure different compartments — interstitial fluid versus capillary blood — with different error budgets. CGMs report MARD around 7–10% across the day, while ISO 15197:2013 finger sticks must stay within ±15 mg/dL or ±15% of a reference. At steady state the bands overlap; during rapid swings the CGM lags by minutes while the finger stick itself lags arterial blood by 2–5 minutes. Neither is laboratory truth.

Can I calibrate my CGM with a finger stick to remove the 8-minute lag?

No. Calibration adjusts the offset and slope of the electrode-to-mg/dL mapping; it does not change the physical diffusion time of glucose into interstitial fluid. Modern factory-calibrated sensors like Dexcom G7 and FreeStyle Libre 3 do not accept user calibration at all, and even older sensors that did would still show the same physiological and filter-driven lag after a finger-stick entry was processed.

Why does my CGM disagree with my finger stick after a meal?

Post-meal disagreement is mostly the rate-of-change effect, not sensor failure. As glucose rises 2–3 mg/dL per minute, an 8-minute total lag produces a 16–24 mg/dL expected gap between blood and interstitial fluid. The forward-prediction filter inside modern CGM firmware narrows this gap but cannot eliminate it. The two devices are measuring the same person at different moments and in different fluid compartments, so a mismatch is normal during digestion.

Is it safe to dose insulin from a CGM reading alone?

For sensors cleared under FDA iCGM rules (21 CFR 862.1355), the labeled indication permits non-adjunctive dosing — meaning insulin decisions without a confirming finger stick. That clearance requires at least 87% of readings within ±20% of a laboratory reference. Outside steady state, during the first 24 hours of wear, or whenever symptoms disagree with the displayed value, a finger stick remains the recommended cross-check before dosing.

Further reading

- Rebrin and Steil, “Use of subcutaneous interstitial fluid glucose to estimate blood glucose: revisiting delay and sensor offset” — the lag-vs-rate equation underlying the arrow math.

- Alva et al., “Accuracy of the third generation of a 14-day continuous glucose monitoring system,” Diabetes Therapy, 2023 — FreeStyle Libre 3 pivotal MARD of 7.8%.

- “Accuracy of the 15.5-day G7 iCGM in adults with diabetes,” Diabetes Technology & Therapeutics, 2025 — extended-wear Dexcom G7 with 8.0% MARD.

- 21 CFR 862.1355, the FDA’s iCGM special controls — ±20% / 87% threshold for use in automated insulin dosing.

- “The myth of MARD,” Diabetes Technology & Therapeutics, 2024 — limits of using a single accuracy number to compare sensors.

- Schiavon et al., “Interstitial fluid glucose is not just a shifted-in-time but a distorted mirror of blood glucose,” in silico study confirming the lag is non-linear during fast swings.

- Tandem Control-IQ technology overview and Omnipod 5 SmartAdjust FAQ — how closed-loop controllers internalize CGM lag.