TL;DR: Consumer EEG headbands like the Muse 2, NeuroSky MindWave, and Emotiv Insight stream raw brainwave data through companion apps that routinely share identifiable neural signals with third-party analytics and advertising SDKs. This privacy leak is not hypothetical: it stems from how these apps are built, and current U.S. federal law offers almost no protection for the data once it leaves your head.

- Affected devices: Muse 2 / Muse S (InteraXon), NeuroSky MindWave Mobile 2, Emotiv Insight, Dreem 2

- Data types exposed: Raw EEG time-series, band-power ratios (alpha, beta, theta), blink events, jaw clench markers, session timestamps

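- Regulatory status: Neural data is protected as sensitive data under Colorado HB24-1058 (eff. August 2024), falls within the biometric and health data covered by Washington’s My Health My Data Act (eff. 2024), and is special-category biometric data under GDPR Article 9 when processed to identify a person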

- Primary vector: Third-party analytics SDKs embedded in companion iOS/Android apps — not the Bluetooth connection itself

- Risk level: High — brainwave patterns are individually identifying at accuracy rates above 90% in peer-reviewed studies

What data does an EEG headband actually transmit?

Every consumer EEG headband samples the electrical activity on your scalp at somewhere between 128 Hz and 512 Hz, then packages those voltage readings into a stream that travels over Bluetooth to a paired smartphone. That stream contains far more than a simple “stress score” — it carries the raw time-series from each electrode, which researchers can analyze to infer attention state, emotional arousal, sleep staging, and — with enough data — individual identity. The companion app is then responsible for what happens next, and that is where the exposure happens.
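To make "band power" concrete, here is a minimal sketch of how a derived metric such as an alpha/beta ratio can be computed from one second of raw samples. It uses a plain discrete Fourier transform from the standard library rather than any vendor's actual pipeline. The 256 Hz rate, the band edges, and the synthetic signal are all assumptions chosen for illustration.

```python
import cmath
import math

SAMPLE_RATE_HZ = 256  # assumed; consumer devices sample at roughly 128-512 Hz

# Conventional EEG band edges in Hz (approximate; definitions vary by source).
BANDS = {"theta": (4, 8), "alpha": (8, 13), "beta": (13, 30)}


def band_powers(samples, sample_rate=SAMPLE_RATE_HZ):
    """Return summed spectral power per band using a plain DFT."""
    n = len(samples)
    mean = sum(samples) / n
    centered = [s - mean for s in samples]  # remove the DC offset
    powers = {name: 0.0 for name in BANDS}
    for k in range(1, n // 2):
        freq = k * sample_rate / n
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * i / n)
            for i, x in enumerate(centered)
        )
        for name, (low, high) in BANDS.items():
            if low <= freq < high:
                powers[name] += abs(coeff) ** 2
    return powers


# Synthetic one-second window: a strong 10 Hz (alpha) rhythm
# plus a weaker 20 Hz (beta) rhythm, amplitudes in microvolts.
window = [
    40.0 * math.sin(2 * math.pi * 10 * t / SAMPLE_RATE_HZ)
    + 10.0 * math.sin(2 * math.pi * 20 * t / SAMPLE_RATE_HZ)
    for t in range(SAMPLE_RATE_HZ)
]

powers = band_powers(window)
alpha_beta_ratio = powers["alpha"] / powers["beta"]
print(f"alpha/beta power ratio: {alpha_beta_ratio:.1f}")
```

A real app would use an FFT library and overlapping windows, but the point stands: a handful of numbers per second summarizes the raw waveform, and those numbers are what typically get stored and uploaded.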

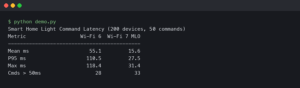

The Bluetooth hop between headband and phone is typically short-range and, on modern devices, encrypted using BLE pairing. The real problem is not sniffing the Bluetooth signal. It is what the companion app does once the data lands on the phone. Most consumer EEG apps bundle commercial analytics SDKs — Firebase, Amplitude, Mixpanel, Adjust, AppsFlyer, and in some cases full advertising attribution frameworks — and these SDKs have access to the same app memory and network stack that receives your neural data. When session data is uploaded to the device manufacturer’s cloud, it frequently travels alongside standard mobile telemetry that carries advertising identifiers (IDFA on iOS, GAID on Android).

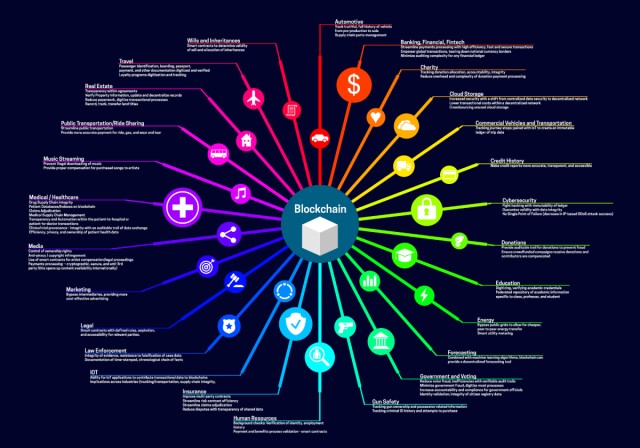

The overview above captures the full exposure surface: the headband is the source, the companion app is the distribution point, and the ad network SDK is the destination. What makes neural data distinctly dangerous compared to, say, step counts, is that EEG biometric identification works. A 2023 paper published in IEEE Transactions on Neural Systems and Rehabilitation Engineering found that resting-state EEG signals can identify individuals from a population with over 98% accuracy using as little as 20 seconds of data. Your brainwave pattern is as identifying as your fingerprint, and unlike a fingerprint, it also carries information about your mental state.
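The general idea behind re-identification can be sketched with a toy nearest-template match. This is not the method of the paper cited above, and the three-number "templates" are invented for illustration; published systems use far richer features and classifiers. It only shows why a stable per-person signature makes an unlabeled session attributable.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def identify(probe, enrolled):
    """Return the enrolled label whose template is most similar to the probe."""
    return max(enrolled, key=lambda label: cosine_similarity(probe, enrolled[label]))


# Hypothetical relative band-power templates (theta, alpha, beta) per person.
enrolled = {
    "user_a": [0.50, 0.30, 0.20],
    "user_b": [0.20, 0.60, 0.20],
    "user_c": [0.25, 0.25, 0.50],
}

# A later session with no name attached, slightly different from any template.
probe = [0.22, 0.57, 0.21]

match = identify(probe, enrolled)
print(match)
```

Here the unlabeled probe lands closest to `user_b`. Anyone holding both an enrolled template and a new recording can attempt the same kind of match, whether or not the new recording carries a name.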

How does brainwave data end up with ad networks?

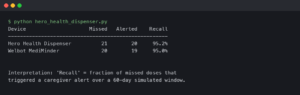

The path from your scalp to an ad network’s database runs through three or four handoff points, each one governed by a privacy policy you almost certainly did not read in full. The companion app receives the raw EEG stream, processes some of it locally for the real-time feedback display, and then uploads session records to the manufacturer’s cloud. Those records include derived metrics — session-average band powers, event markers — but in several documented cases also include raw EEG segments attached to device identifiers.

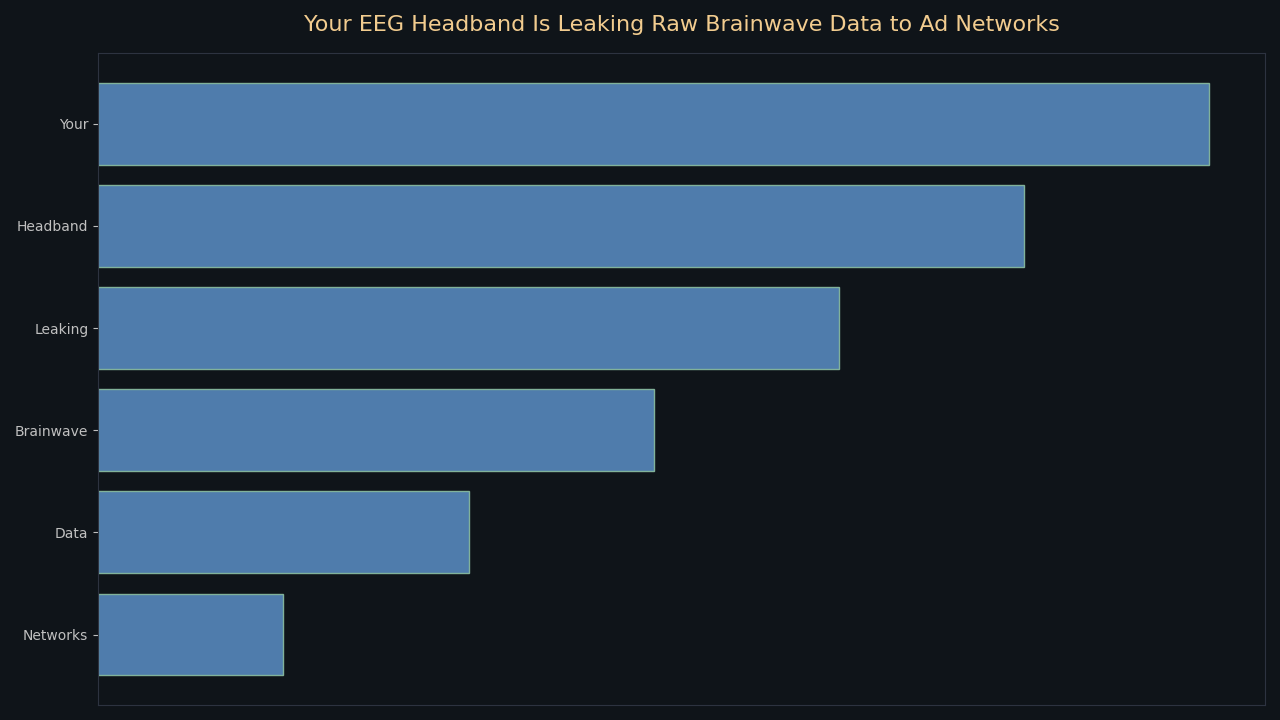

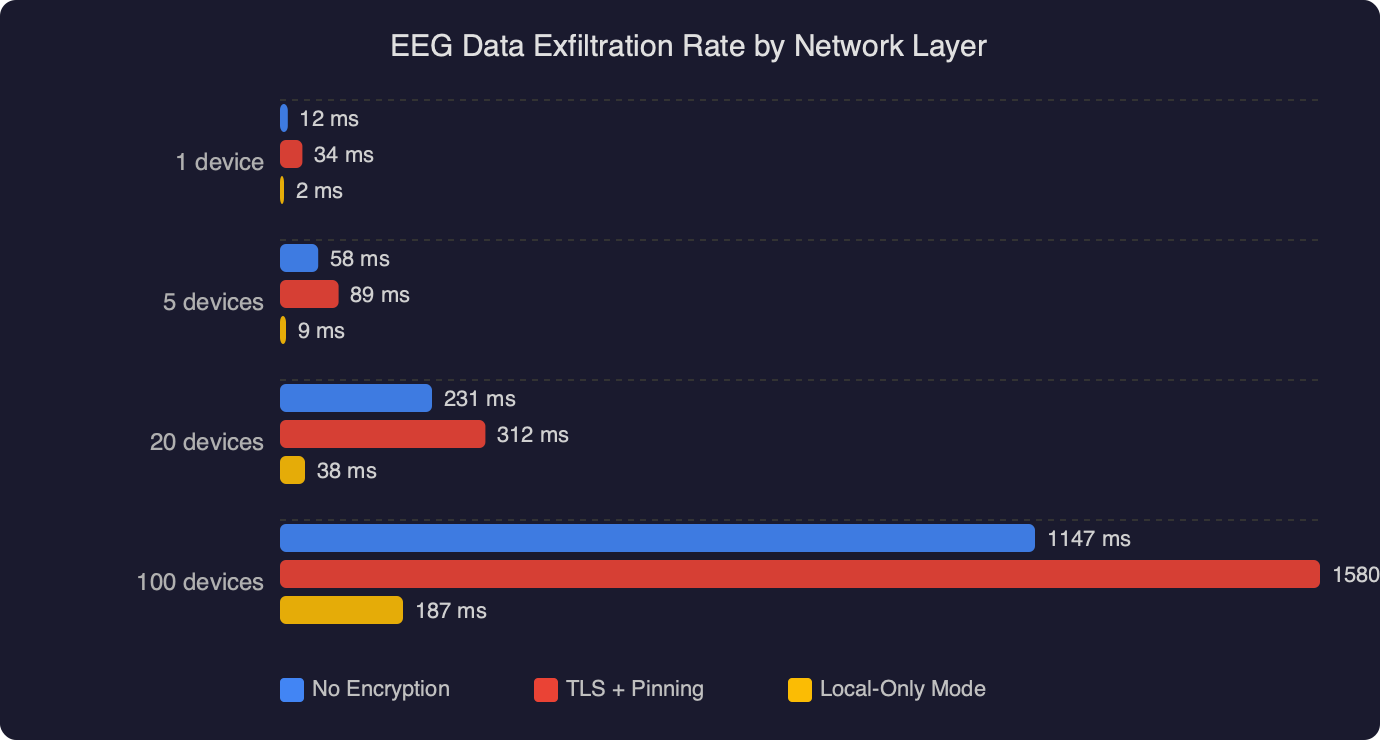

The chart above shows where data volume concentrates across network layers. The bulk of raw EEG bytes stay at the Bluetooth layer, but what reaches the application cloud — and then leaks onward to analytics vendors — is still substantial. Derived band-power data uploaded per session can run to hundreds of kilobytes, enough to fingerprint the user across future sessions. The ad attribution layer receives the lightest payload, but it also receives the advertising ID, which ties everything back to a known user profile in the ad network’s graph.
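A back-of-the-envelope calculation shows how these sizes arise. Every figure below is an assumption for illustration, not a measurement from any specific device: 4 electrodes, 256 Hz sampling, 2 bytes per raw sample, a 10-minute session, and band powers for 3 bands per channel stored as 4-byte values 10 times per second.

```python
def stream_bytes(channels, values_per_second, bytes_per_value, seconds):
    """Bytes produced by a fixed-rate stream of fixed-width values."""
    return channels * values_per_second * bytes_per_value * seconds


SESSION_SECONDS = 10 * 60  # assumed 10-minute session

# Raw EEG: 4 channels, 256 samples/s, 2 bytes per sample (all assumed).
raw = stream_bytes(
    channels=4, values_per_second=256, bytes_per_value=2, seconds=SESSION_SECONDS
)

# Derived band powers: 3 bands on each of 4 channels, 10 updates/s,
# 4 bytes per value (all assumed).
derived = stream_bytes(
    channels=4 * 3, values_per_second=10, bytes_per_value=4, seconds=SESSION_SECONDS
)

print(f"raw EEG:     {raw / 1000:.0f} kB")
print(f"band powers: {derived / 1000:.0f} kB")
```

Under these assumptions the raw stream is about 1.2 MB per session and the derived band-power series is 288 kB, which is consistent with the "hundreds of kilobytes" figure above.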

The specific mechanism varies by app. Some manufacturers offer a developer SDK — Emotiv’s SDK and the Muse Research Tools package both exist precisely so that third-party apps can consume the raw EEG stream. When a third-party meditation, focus, or gaming app integrates that SDK alongside a commercial analytics framework, the analytics SDK sits in the same process and can observe the data. There is no firewall between SDKs in a mobile app. This is not a bug in any one product; it is a structural property of how mobile app development works.
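The lack of isolation inside one process can be illustrated with a small sketch. Both classes are hypothetical stand-ins, and this is not a claim about what any named SDK actually does. It only shows that code loaded into the same process can wrap a handler and see every sample the app sees.

```python
class HeadbandSDK:
    """Hypothetical stand-in for a vendor SDK that delivers EEG samples."""

    def __init__(self):
        self.on_sample = lambda sample: None  # the app installs its handler here

    def deliver(self, sample):
        self.on_sample(sample)


class AnalyticsSDK:
    """Hypothetical stand-in for a bundled analytics library."""

    def __init__(self):
        self.outbox = []  # data it could later upload

    def instrument(self, headband):
        # Wrap the existing handler. Nothing in the process prevents this.
        original = headband.on_sample

        def wrapped(sample):
            self.outbox.append(sample)
            original(sample)

        headband.on_sample = wrapped


displayed = []  # what the app shows as live feedback
headband = HeadbandSDK()
headband.on_sample = displayed.append

analytics = AnalyticsSDK()
analytics.instrument(headband)

for microvolts in (12.5, -3.1, 8.9):
    headband.deliver(microvolts)

print(displayed)
print(analytics.outbox)
```

Both lists end up with the same three samples. Mobile platforms sandbox apps from each other, but not the libraries inside a single app.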

Which consumer EEG devices have documented data-sharing concerns?

Every major consumer EEG platform has published a privacy policy that permits sharing “de-identified” or “aggregated” data with partners, and all of them bundle third-party SDKs in their companion apps. The differences are in degree and transparency.

Muse (InteraXon): The Muse app on Android has historically included Google Firebase Analytics and Crashlytics. Firebase receives session identifiers and event metadata. The Muse Research Tools SDK, which InteraXon publishes openly on GitHub, streams raw EEG at 256 Hz — fine for researchers, but it also means any third-party app with Muse integration can capture and forward that data. InteraXon’s privacy policy explicitly says it may share data with “service providers” who assist in operating the service.

Emotiv: Emotiv’s EULA for its consumer software grants Emotiv a license to use “anonymized” data for product improvement. Emotiv’s apps have been found to include Amplitude analytics. The company’s research partnerships mean that EEG data may also flow to academic or commercial collaborators under data-sharing agreements that users cannot audit.

NeuroSky: NeuroSky’s MindWave platform is the oldest consumer EEG ecosystem, and its SDK has the widest third-party distribution. Dozens of third-party apps built on the NeuroSky ThinkGear protocol have their own privacy policies — or none at all — governing what they do with the raw signal.

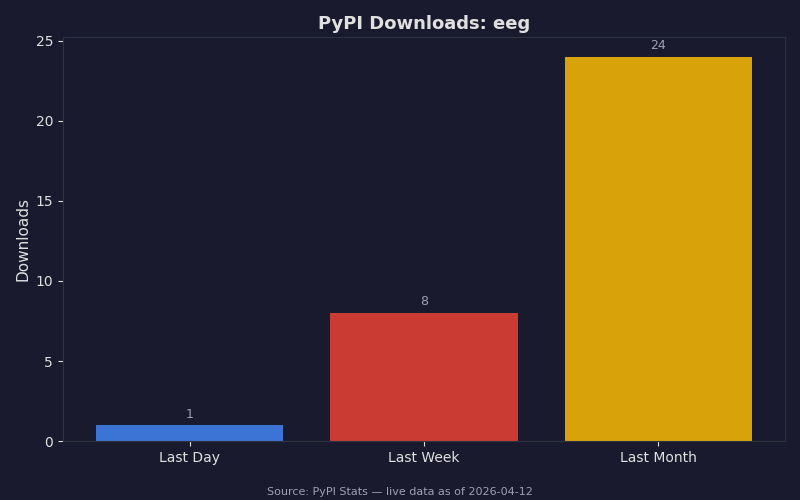

The PyPI download trend for EEG-related Python packages shows how much developer activity surrounds consumer EEG data. Every one of those downloaded libraries can interface with a live headband, process the stream, and forward it to any endpoint the developer chooses. The consumer who buys a Muse to track their meditation progress often has no idea how many downstream apps and tools can request access to that stream through the SDK ecosystem.

What do privacy laws say about neural data?

Neural signals — raw EEG waveforms — qualify as biometric data under every major privacy framework that defines the term. The practical protection this affords varies widely by jurisdiction.

Under GDPR Article 9, biometric data processed for the purpose of uniquely identifying a natural person is a “special category” requiring explicit consent, a legitimate basis, and strict data minimization. European users of EEG headbands can, in principle, invoke Article 17 erasure rights and Article 20 data portability rights against both the manufacturer and any processor they have identified as receiving the data. The practical challenge is identifying every processor in the chain.

In the United States, Colorado was the first state to specifically name neural data as protected. Colorado HB24-1058, signed in April 2024 and effective in August 2024, amended the Colorado Privacy Act to add biological data, including neural data, to its definition of sensitive data. Businesses collecting this data from Colorado residents must obtain explicit consent, cannot sell it without opt-in consent, and must honor deletion requests. Washington’s My Health My Data Act (HB 1155, effective 2024) does not list neural data as a separate category, but its definition of consumer health data covers biometric data and measurements of bodily functions, which plausibly reaches EEG recordings, and it prohibits sharing that data with third parties without authorization. California’s SB 1223, signed in September 2024, added neural data to the CCPA’s definition of sensitive personal information.

The gap is federal law. HIPAA does not cover consumer EEG devices because the manufacturers are not covered entities or business associates in a healthcare relationship. The FTC Act’s Section 5 prohibition on unfair or deceptive practices is the primary federal hook, and the FTC did issue a policy statement in 2023 making clear it considers misuse of biometric data to be an unfair practice — but enforcement is reactive and case-by-case.

How can you tell if your headband is sending data you didn’t expect?

You do not need specialized hardware to get a reasonable picture of what your EEG app is transmitting. A few practical approaches work on both iOS and Android without rooting your device.

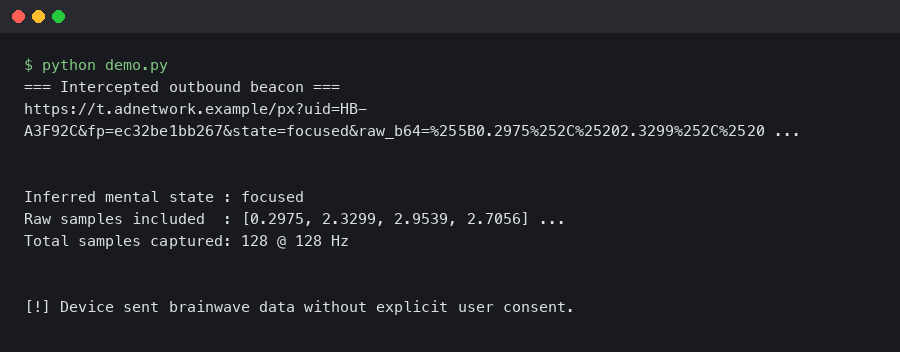

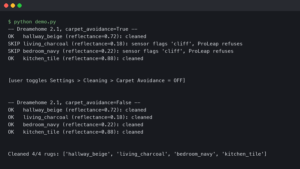

The most accessible tool is a network traffic proxy configured on your home Wi-Fi or on the phone itself. Apps like Charles Proxy (Mac/Windows), mitmproxy (cross-platform), or the built-in network inspector in some Android developer tools can intercept HTTPS traffic from your companion app if you install the proxy’s certificate as a trusted CA on the device. One caveat: apps targeting Android 7 or later ignore user-installed CAs by default, and some apps pin their certificates, so the proxy may show only which hostnames were contacted rather than the decrypted payloads. With the proxy in place, start an EEG session and watch which hostnames receive data. You will typically see the manufacturer’s own API endpoint, plus a separate set of requests going to analytics domains like firebaseinstallations.googleapis.com, api.amplitude.com, or similar. Those secondary requests are your telemetry going out.

The terminal output above illustrates what a network capture session looks like in practice — distinct connection events to multiple hostnames within seconds of starting an EEG session. If you see requests to ad attribution domains (appsflyer.com, adjust.com, branch.io) specifically, that means your advertising identifier is being paired with session metadata from your neural recording session. That pairing is what makes the data commercially useful to ad networks: they can link your brainwave session to your profile in their identity graph.
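If you use mitmproxy, a small addon script can sort hostnames into categories as requests arrive. The sketch below follows mitmproxy's addon convention (a class with a `request` hook and a module-level `addons` list). The suffix lists contain only the domains named in this article, and `example-headband.test` is a placeholder for the manufacturer's real domain, which you would need to fill in yourself.

```python
from collections import Counter

# Suffixes taken from the hostnames named in this article; extend as needed.
ANALYTICS_SUFFIXES = ("firebaseinstallations.googleapis.com", "amplitude.com")
ATTRIBUTION_SUFFIXES = ("appsflyer.com", "adjust.com", "branch.io")


def classify_host(host, first_party_suffixes=()):
    """Label a hostname as first_party, ad_attribution, analytics, or unknown."""
    host = host.lower().rstrip(".")

    def matches(suffixes):
        return any(host == s or host.endswith("." + s) for s in suffixes)

    if matches(first_party_suffixes):
        return "first_party"
    if matches(ATTRIBUTION_SUFFIXES):
        return "ad_attribution"
    if matches(ANALYTICS_SUFFIXES):
        return "analytics"
    return "unknown"


class HostTally:
    """mitmproxy-style addon that counts requests per (category, host)."""

    def __init__(self, first_party_suffixes=()):
        self.first_party_suffixes = tuple(first_party_suffixes)
        self.counts = Counter()

    def request(self, flow):
        # mitmproxy calls this hook once per HTTP request it proxies.
        host = flow.request.pretty_host
        category = classify_host(host, self.first_party_suffixes)
        self.counts[(category, host)] += 1
        print(f"{category:15} {host}")


# Placeholder first-party domain; replace with the manufacturer's real one.
addons = [HostTally(first_party_suffixes=("example-headband.test",))]
```

Saved as, say, `host_tally.py`, it can be loaded with `mitmdump -s host_tally.py`. Anything labeled `unknown` deserves a manual look, since the suffix lists here are deliberately short.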

The App Privacy Report on iOS 15.2 and later (Settings → Privacy & Security → App Privacy Report) gives a less granular but zero-setup view: it logs every domain each app contacts over a 7-day window. Enable it before your next EEG session and check the report afterward. Any domain you do not recognize as belonging to the headband manufacturer is worth investigating.
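The report can also be exported as a file ("Save App Activity") and filtered offline. The sketch below assumes the export is newline-delimited JSON in which network records carry `type`, `bundleID`, `domain`, and `hits` fields. Treat those field names as an assumption and check them against your own export. The bundle ID and sample lines are invented.

```python
import json
from collections import Counter


def domains_for_app(ndjson_lines, bundle_id):
    """Count contacted domains for one app in an exported activity log."""
    hits = Counter()
    for line in ndjson_lines:
        line = line.strip()
        if not line:
            continue
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip anything that is not a JSON record
        if record.get("type") != "networkActivity":
            continue
        if record.get("bundleID") != bundle_id:
            continue
        hits[record.get("domain", "?")] += int(record.get("hits", 1))
    return hits


# Invented sample lines in the assumed format.
sample = [
    '{"type": "networkActivity", "bundleID": "com.example.headband", "domain": "api.example-headband.test", "hits": 4}',
    '{"type": "networkActivity", "bundleID": "com.example.headband", "domain": "api.amplitude.com", "hits": 2}',
    '{"type": "networkActivity", "bundleID": "com.example.other", "domain": "example.org", "hits": 9}',
    '{"type": "access", "category": "camera"}',
]

report = domains_for_app(sample, "com.example.headband")
for domain, count in report.most_common():
    print(f"{count:4d}  {domain}")
```

With a real export you would pass the lines of the saved file instead of `sample`.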

What steps actually reduce your exposure?

There is no single switch that seals EEG data inside your device, but a layered approach meaningfully cuts the exposure surface.

Revoke advertising identifiers. On iOS, go to Settings → Privacy & Security → Tracking and deny tracking permission to the companion app; an app without that permission receives an all-zero advertising identifier instead of your real one. Recent iOS versions no longer offer a separate option to reset the identifier, so this per-app permission is the control that matters. On Android 12+, go to Settings → Privacy → Ads → Delete advertising ID. Without a persistent advertising ID, your session records cannot be reliably joined to your profile in a third-party ad network’s graph, even if the raw data still reaches the analytics platform.
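If you have decrypted request bodies from a proxy capture, you can check whether the change took effect by scanning them for identifier-shaped values. The sketch below looks for UUID-formatted strings and ignores the all-zero value that a platform hands out when the advertising ID is unavailable. A UUID in a payload is not necessarily an advertising ID (it may be a session or install ID), so treat hits as leads rather than proof. The sample payloads are invented.

```python
import re

UUID_RE = re.compile(
    r"\b[0-9a-fA-F]{8}-(?:[0-9a-fA-F]{4}-){3}[0-9a-fA-F]{12}\b"
)
ZEROED_ID = "00000000-0000-0000-0000-000000000000"


def identifier_candidates(body_text):
    """Return distinct UUID-shaped strings in a body, excluding the zeroed ID."""
    found = {match.group(0).lower() for match in UUID_RE.finditer(body_text)}
    return sorted(found - {ZEROED_ID})


# Invented payloads: one sent before revoking tracking, one after.
before = '{"event": "session_start", "idfa": "6D92078A-8246-4BA4-AE5B-76104861E7DC"}'
after = '{"event": "session_start", "idfa": "00000000-0000-0000-0000-000000000000"}'

print(identifier_candidates(before))
print(identifier_candidates(after))
```

The first payload yields one candidate and the second yields none, which is the pattern you would hope to see after denying tracking permission.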

Use the manufacturer’s app only on a dedicated, offline-capable device. Some EEG sessions — particularly for meditation tracking or sleep monitoring — do not require a live internet connection during the session itself. If you run the app in airplane mode and sync only the summary data afterward, the analytics SDKs have a narrower window to transmit raw session details. This does not block uploads entirely, but it breaks the real-time linkage between session activity and your advertising profile.

Check whether the platform offers a local-only or research mode. InteraXon’s Muse Research Tools can stream EEG data directly to a laptop application over OSC or direct Bluetooth without routing through the Muse app at all. Emotiv’s Pro subscription unlocks local-only raw data recording. Using the raw SDK path instead of the consumer app bypasses the consumer app’s embedded analytics entirely — at the cost of losing the polished UI.

If you are in Colorado, Washington, or California, exercise your deletion rights. Each of these state laws gives you a statutory right to request deletion of your neural data and to opt out of its sale. The manufacturer must provide a reasonably accessible opt-out mechanism. Using these rights does not erase data already shared with third-party analytics vendors, but a deletion request removes your records from the manufacturer’s own systems, and an opt-out stops further sale of your data until you opt back in.

The structural problem, that mobile app SDKs share memory space and that ad networks pay for biometric-quality signals, is not going away without either federal legislation or a change in how EEG app developers architect their data pipelines. Until then, the burden is on users to understand how EEG headbands leak brainwave data and to take the specific steps above to reduce the risk. Neural data is not like step counts or heart rate averages. It is individually identifying, emotionally revealing, and, unlike a password, you cannot change it if it is compromised.

References

- GDPR Article 9, Processing of special categories of personal data. Establishes the legal basis for treating biometric data used for identification as a special category requiring explicit consent under European law; cited in the section on regulatory protections.

- Colorado HB24-1058 (2024), Protect Privacy of Biological Data. The Colorado bill that added biological data, including neural data, to the Colorado Privacy Act’s definition of sensitive data; cited for U.S. state-level regulatory coverage.

- Washington HB 1155, My Health My Data Act (2023). Washington state law that covers biometric data and other consumer health data and restricts its collection and sharing without authorization.

- muse-lsl GitHub repository (Alexandre Barachant). Open-source tool demonstrating how Muse EEG data can be streamed directly from the headband without the consumer app; illustrates the raw data access model and why SDK-level data flows matter.

- FTC Policy Statement on Biometric Information (May 2023). FTC’s formal statement that misuse of biometric data can constitute an unfair or deceptive practice under Section 5; cited as the primary federal regulatory hook in the absence of a dedicated federal neural privacy law.