I was standing in my driveway at 11 PM last Tuesday, staring at a dead motion sensor that had dropped off my 2.4GHz network for the fourth time that week. It was supposed to trigger the floodlights. It didn’t. And I ended up stubbing my toe on a stray brick in the dark.

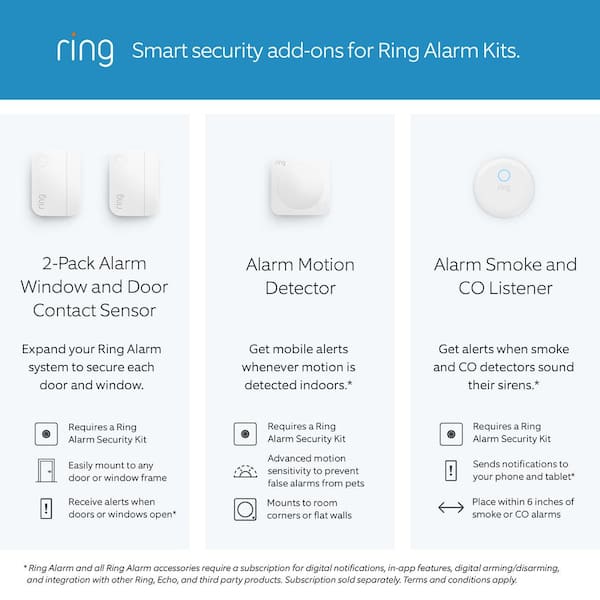

If you build smart home setups, you already know the pain. Wi-Fi is a power hog — period. It was never designed for tiny plastic boxes running on coin-cell batteries, yet hardware manufacturers spent the last decade trying to force it to work. You either dealt with devices dying every two months, or you bought into a proprietary Zigbee or Z-Wave ecosystem that required a dedicated hub plugged into your router.

But things finally started shifting earlier this year. The post-CES 2026 hardware wave is aggressively abandoning traditional Wi-Fi for low-power, long-range protocols operating in the 900 MHz band. These devices don’t need a hub. They don’t need your Wi-Fi password. They just wake up, find a compatible neighborhood network mesh — like Amazon’s Sidewalk protocol — and start broadcasting.

The Battery and Range Reality Check

I ripped out my old Wi-Fi contact sensors last month and installed a batch of these new long-range units on my perimeter gates. To be precise, I tied them into my local setup and tested them on a Raspberry Pi 5 running Home Assistant OS 12.0.

The difference in power consumption is absurd. My old Wi-Fi units dropped about 14% battery a week just maintaining the connection keep-alives. The new 900 MHz hardware? Barely 1.2% over the same period. More importantly, they actually stay connected. My farthest gate is 140 feet from the house through two brick walls. The Wi-Fi sensor ghosted offline at exactly 74 feet from my nearest AP. The new protocol doesn’t even sweat the distance.
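To put those weekly drain numbers in perspective, here is a back-of-the-envelope sketch that converts them into time until a full battery is empty. It assumes drain is perfectly linear, which real cells only approximate, and the function name is made up for illustration.

```python
# Rough battery-life estimate from the weekly drain figures quoted above.
# Assumption: drain is linear from 100% down to 0%.

def weeks_until_empty(drain_pct_per_week: float) -> float:
    """Weeks for a full battery to reach 0% at a constant weekly drain."""
    if drain_pct_per_week <= 0:
        raise ValueError("drain must be positive")
    return 100.0 / drain_pct_per_week

wifi_weeks = weeks_until_empty(14.0)       # old Wi-Fi contact sensor
longrange_weeks = weeks_until_empty(1.2)   # new 900 MHz unit
ratio = longrange_weeks / wifi_weeks

print(f"Wi-Fi:   {wifi_weeks:.1f} weeks")
print(f"900 MHz: {longrange_weeks:.1f} weeks")
print(f"Ratio:   {ratio:.1f}x")
```

Under that linear assumption the Wi-Fi units last about seven weeks, which lines up with the "dying every two months" experience, while the 900 MHz units would stretch to roughly a year and a half.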

But there is a catch nobody mentions in the marketing copy. Bandwidth.

The Edge AI Gotcha

Because these long-range networks max out at around 80 Kbps, you absolutely cannot send raw video or complex data to the cloud for processing. The pipe is just too small.
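A quick sketch of the arithmetic shows how small that pipe is. The 200 KB snapshot size and the 50-byte status message are assumptions picked for illustration, and the calculation ignores protocol overhead, retries, and duty-cycle limits, so real transfers would be slower.

```python
# Idealized transfer time over the ~80 Kbps link described above.
LINK_BPS = 80_000  # bits per second

def transfer_seconds(payload_bytes: int, link_bps: int = LINK_BPS) -> float:
    """Seconds to push payload_bytes through the link with zero overhead."""
    return payload_bytes * 8 / link_bps

snapshot_bytes = 200_000  # assumed size of one compressed still image
message_bytes = 50        # assumed size of a short status message

snapshot_s = transfer_seconds(snapshot_bytes)
message_s = transfer_seconds(message_bytes)

print(f"snapshot: {snapshot_s:.1f} s")
print(f"message:  {message_s * 1000:.1f} ms")
```

With those assumed sizes, a single still image ties up the link for 20 seconds while the short message goes out in about 5 milliseconds.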

This is where local AI comes in, and it’s a double-edged sword. Manufacturers are cramming tiny neural processing units (NPUs) into the sensors themselves. Instead of sending a video clip of a moving object to a server to figure out if it’s a person or a stray cat, the sensor runs a lightweight model locally. It only transmits a tiny text payload over the 900 MHz network: {"event": "person_detected", "confidence": 0.89}.
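On the receiving side, a payload like that is easy to handle defensively. The sketch below is not any vendor's API: `parse_event`, `should_alert`, and the 0.80 threshold are hypothetical names and values, and it assumes the message arrives as raw JSON bytes.

```python
import json

MIN_CONFIDENCE = 0.80  # hypothetical threshold; tune per sensor

def parse_event(raw: bytes):
    """Return (event, confidence), or None if the payload is malformed."""
    try:
        msg = json.loads(raw)
    except ValueError:  # covers invalid JSON and invalid UTF-8
        return None
    if not isinstance(msg, dict):
        return None
    event = msg.get("event")
    confidence = msg.get("confidence")
    if not isinstance(event, str):
        return None
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        return None
    if not 0.0 <= confidence <= 1.0:
        return None
    return event, float(confidence)

def should_alert(raw: bytes) -> bool:
    """Alert only on a well-formed person detection above the threshold."""
    parsed = parse_event(raw)
    if parsed is None:
        return False
    event, confidence = parsed
    return event == "person_detected" and confidence >= MIN_CONFIDENCE
```

Because the sensor's verdict is all you get, a confidence threshold is about the only filter available on the hub side. Setting it too high trades false alarms for missed detections.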

Here’s what actually happens in practice: If the local chip misidentifies a raccoon as a human, you get an alert. But because the device isn’t on Wi-Fi, you don’t get the snapshot to verify it immediately. To see what triggered the sensor, the system has to wake up the device’s secondary Wi-Fi radio (if it has one) or you have to pull up a separate hardwired camera feed. In my testing, waiting for the sensor to negotiate a high-bandwidth connection just to send a confirmation image adds about a 3.5-second delay to my automation flows.

That doesn’t sound like much until you’re waiting in the dark for the lights to turn on.
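One possible way to keep that delay off the critical path, assuming your automation platform can run actions concurrently, is to switch the lights on as soon as the event arrives and fetch the confirmation image in the background. The sketch below only simulates the idea with `asyncio`. `turn_on_lights` and `fetch_snapshot` are stand-ins, not real device calls.

```python
import asyncio
import time

SNAPSHOT_DELAY_S = 3.5  # handoff delay measured in the testing above

async def turn_on_lights(log):
    # Stand-in for a real light-control call (hypothetical).
    log.append(("lights_on", time.monotonic()))

async def fetch_snapshot(log, delay_s=SNAPSHOT_DELAY_S):
    # Stand-in for waking the secondary radio and pulling an image.
    await asyncio.sleep(delay_s)
    log.append(("snapshot_ready", time.monotonic()))

async def handle_person_detected(delay_s=SNAPSHOT_DELAY_S):
    """Return a list of (step, seconds_since_event) in completion order."""
    log = []
    start = time.monotonic()
    # Schedule the slow snapshot fetch in the background...
    snapshot_task = asyncio.create_task(fetch_snapshot(log, delay_s))
    # ...and switch the lights on right away instead of waiting for it.
    await turn_on_lights(log)
    await snapshot_task
    return [(name, t - start) for name, t in log]
```

Calling `asyncio.run(handle_person_detected())` returns a timeline in which the lights step completes almost immediately and the snapshot step lands after the simulated delay. The trade-off is that the lights fire on the sensor's unverified verdict, raccoons included.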

Where We Go From Here

I don’t think I’ll ever buy another Wi-Fi-dependent battery sensor. The trade-offs just aren’t worth it anymore.

The local AI models are getting less stupid by the month. I expect that by Q1 2027 we’ll probably see edge models capable of running multi-object tracking locally without needing a cloud fallback at all. Right now, you just have to accept that your long-range sensors are highly opinionated little black boxes. They tell you what they think they saw, and you have to trust them.

If you’re still fighting with 2.4GHz extenders just to keep your mailbox sensor online, stop. The networking hardware has finally caught up to the reality of how houses are actually built. Just don’t expect these things to stream 4K video from a mile away.

Frequently asked questions

How much battery do 900 MHz long-range IoT sensors save compared to Wi-Fi sensors?

In side-by-side testing on a Raspberry Pi 5 running Home Assistant OS 12.0, old Wi-Fi contact sensors drained roughly 14% battery per week just maintaining keep-alive connections. The new 900 MHz hardware used barely 1.2% over the same period. The savings come from avoiding constant Wi-Fi handshakes, letting tiny coin-cell-powered sensors stay alive far longer between battery swaps.

Why can’t long-range 900 MHz sensors send video to the cloud?

Long-range IoT networks like Amazon Sidewalk operating in the 900 MHz band max out around 80 Kbps, which is far too narrow a pipe for raw video or complex data streams. Instead, sensors run lightweight neural models locally on built-in NPUs and transmit only tiny text payloads such as {"event": "person_detected", "confidence": 0.89} over the mesh.

What is the real-world range of 900 MHz smart home sensors through walls?

In the author’s setup, a 900 MHz sensor mounted on a perimeter gate 140 feet from the house held a stable connection through two brick walls without trouble. By comparison, the previous Wi-Fi contact sensor dropped offline at exactly 74 feet from the nearest access point. The lower frequency penetrates building materials far better than 2.4GHz Wi-Fi.

How do you verify what triggered a long-range motion sensor without Wi-Fi?

Because the 80 Kbps link can’t carry images, confirming a trigger requires waking the sensor’s secondary Wi-Fi radio (if equipped) or pulling footage from a separate hardwired camera feed. In the author’s testing, negotiating that high-bandwidth handoff to deliver a confirmation snapshot added roughly a 3.5-second delay to automation flows, noticeable when waiting in the dark for floodlights.